How to Create Website Mockups People Actually Love

Learn how to create website mockups that bridge the gap between idea and reality. Our guide covers tools, principles, and developer handoff.

Build beautiful websites like these in minutes

Use Alpha to create, publish, and manage a fully functional website with ease.

At its core, A/B testing is a straightforward experiment. You take two versions of something—a webpage, an email, an ad—and pit them against each other to see which one performs better. Version A is your control (the original), and Version B is the variation you want to test.

Think of it like an audience poll. You show half your audience one version and the other half the second version. The winner is decided not by opinion, but by actual, measurable user behavior.

Why A/B Testing Is Your Secret Weapon

Ever bake cookies and wonder if a different kind of chocolate chip would make them better? You could guess, or you could bake two small batches and have your friends do a blind taste test. That's exactly what A/B testing does for your marketing. It swaps out guesswork and gut feelings for cold, hard data.

This process gives you the power to make confident decisions that directly lead to more clicks, sign-ups, and sales.

This isn't just a game for big corporations with massive budgets. It’s a crucial tool for any entrepreneur or business owner. Instead of sitting in a meeting wondering if a new headline will resonate, you can test it. Instead of debating the color of a "Buy Now" button, you can measure its real-world impact. This cycle of testing, learning, and iterating is the fastest way to figure out what your audience actually wants.

Ultimately, it's all about learning how to improve the conversion rate on your website and getting more visitors to take the actions you care about.

Before we dive deeper, here’s a quick breakdown of the core concepts.

A/B Testing at a Glance

| Component | Description |
|---|---|
| Control (A) | The original, unchanged version of your page, email, or ad. This is your baseline for comparison. |
| Variation (B) | The modified version where you've changed a single element, like the headline or call-to-action button. |
| Hypothesis | A clear statement about what you expect to happen. For example, "Changing the button color to green will increase clicks." |
| Traffic Splitting | Dividing your audience randomly to show some the Control and others the Variation, ensuring a fair test. |
| Key Metric | The specific goal you're measuring, such as click-through rate, form submissions, or sales. |

This simple framework is the engine for continuous improvement.

The Power of Data-Driven Decisions

One of the most expensive mistakes any business can make is running on assumptions. A/B testing is your safety net—it gives you real evidence to back up your ideas before you commit to rolling them out everywhere. It’s a low-risk, high-reward way to innovate and make sure every change you make is a step in the right direction.

By systematically testing one variable at a time—such as a headline, an image, or a call-to-action—you can pinpoint exactly what influences user behavior and make incremental improvements that lead to significant gains over time.

This disciplined approach isn't just a trend; it's becoming standard practice for a reason. Today, about 77% of companies are running A/B tests on their websites. This number has shot up in recent years, showing a clear industry shift toward making data, not just intuition, the final arbiter. If you're curious about adoption rates, you can find more insights on VWO.com.

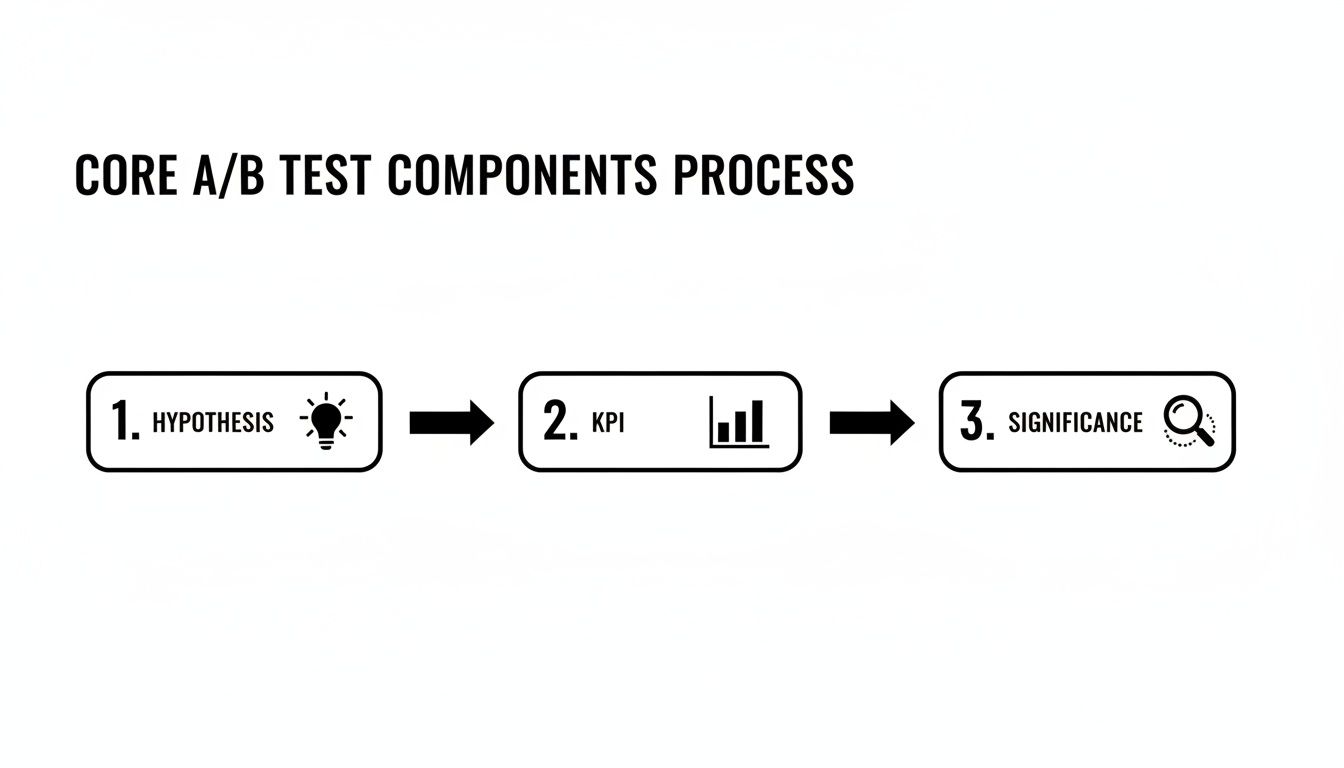

The Core Components of a Successful A/B Test

Jumping into an A/B test without a solid plan is like starting a road trip without a map—you might end up somewhere, but probably not where you intended. To get results you can actually trust, you need to build your experiment on a few foundational pillars.

Think of these components as the recipe for your test. Each ingredient is crucial. Miss one, and you’ll end up with a mess instead of a clear, actionable insight into what your audience really wants.

Start with a Strong Hypothesis

Before you change a single pixel on your page, you need a hypothesis. This is just a fancy term for an educated guess that frames your entire experiment. It’s a clear, simple statement that lays out the change you’re making, the result you expect, and—most importantly—why you expect it.

A vague goal like "improve the homepage" is useless. You need to get specific.

A strong hypothesis looks something like this: “Changing the call-to-action (CTA) button color from grey to bright orange will increase form submissions because the new color has higher contrast and will grab the user's attention more effectively.”

See the difference? This format forces you to justify the test. It connects a specific action to a measurable result and a logical reason, turning a random shot in the dark into a strategic experiment.

Identify Your Key Performance Indicators

Once your hypothesis is set, you need to decide how you'll keep score. This is your Key Performance Indicator (KPI), the one metric that will ultimately determine whether version A or version B is the winner. It's critical to stick to one primary KPI to avoid confusing, contradictory results.

Common KPIs in A/B testing include:

Conversion Rate: The percentage of visitors who complete a goal, like buying a product or signing up.

Click-Through Rate (CTR): The percentage of people who click on a specific button or link.

Bounce Rate: The percentage of visitors who land on your page and leave without doing anything else.

The right KPI flows directly from your hypothesis. If you're testing a new headline to keep people engaged, your KPI is probably bounce rate. If you're testing a checkout button, it's all about the conversion rate.
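To make the three KPIs above concrete, here is a minimal Python sketch that computes them from raw counts. The `PageStats` fields and the sample numbers are made up for illustration; your analytics tool will have its own names and definitions (bounce rate in particular is defined slightly differently from tool to tool).

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Raw counts for one page version (hypothetical field names)."""
    visitors: int            # visitors who saw the page
    cta_clicks: int          # visitors who clicked the tracked button or link
    conversions: int         # visitors who completed the goal
    single_page_visits: int  # visitors who left without doing anything else


def rate(part: int, whole: int) -> float:
    """Return part/whole as a percentage, or 0.0 when there is no traffic."""
    return 100.0 * part / whole if whole else 0.0


def kpis(stats: PageStats) -> dict[str, float]:
    """Compute the three KPIs as percentages of visitors."""
    return {
        "conversion_rate": rate(stats.conversions, stats.visitors),
        "click_through_rate": rate(stats.cta_clicks, stats.visitors),
        "bounce_rate": rate(stats.single_page_visits, stats.visitors),
    }


# Illustrative numbers only.
control = PageStats(visitors=2000, cta_clicks=300, conversions=80, single_page_visits=900)
for name, value in kpis(control).items():
    print(f"{name}: {value:.1f}%")
```

Whichever KPI you pick as primary, compute it the same way for both versions so the comparison stays fair.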

Statistical significance is your quality control. It's the statistical evidence that your results are unlikely to be a random fluke. Aiming for at least a 95% confidence level means a difference this large would show up by chance less than 5% of the time if the two versions actually performed the same, so you can make business decisions based on real evidence, not luck.
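If you're curious what a significance check looks like under the hood, here is a rough sketch using a two-proportion z-test, one common way to compare two conversion rates. It is an illustration with made-up numbers, not necessarily the method your testing tool uses; many tools rely on different statistics.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the best single estimate if A and B truly perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts: 5,000 visitors per version.
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=260, n_b=5000)
significant = p < 0.05  # 95% confidence level
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {significant}")
```

A p-value below 0.05 corresponds to the 95% confidence level mentioned above. The approximation behind this test works best when each version has plenty of conversions, so treat results from very small samples with caution.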

Choose High-Impact Elements to Test

Finally, you need to focus your energy where it counts. You could test nearly anything on your site, but some elements pack a much bigger punch when it comes to influencing user behavior. You can learn more about how website heat mapping tools help pinpoint these high-traffic, high-impact areas.

Here are some of the best places to start your testing journey:

Headlines and Subheadings: This is your first impression. A killer headline can be the difference between someone staying or bouncing in seconds.

Call-to-Action (CTA) Buttons: The words you use ("Get Started" vs. "Sign Up Free"), the color, the size, and even the placement can have a massive impact.

Images and Videos: The right visual can communicate your value proposition faster and more effectively than a wall of text ever could.

Page Layout and Navigation: Sometimes, the biggest wins come from simply making it easier for people to find what they're looking for. A simplified layout can do wonders for the user experience.

By focusing on these core components—a clear hypothesis, a single KPI, and a high-impact element—you set the stage for an A/B test that delivers valuable, reliable insights every single time.

How to Run Your First A/B Test Step by Step

Alright, enough theory. Let's get our hands dirty. Running your first A/B test is actually a pretty straightforward process that lets you stop guessing and start knowing what works. This guide will walk you through launching a simple, effective experiment from start to finish.

Step 1: Identify Your Target

First things first, you need to decide what to test. Don't just throw a dart at a board. The best place to start is with a page that gets a lot of traffic but has a surprisingly low conversion rate. Your website analytics are your treasure map here.

A product page with thousands of visitors but only a handful of sales? That’s a perfect candidate. By focusing on these high-potential pages, you get the quickest path to meaningful results and a real chance to improve your bottom line.
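One way to turn "lots of traffic, low conversion rate" into a shortlist is sketched below. The page paths, the numbers, and the `MIN_VISITORS` cutoff are all assumptions for the sake of the example; in practice you would export these counts from your analytics.

```python
# (path, monthly visitors, conversions) with made-up numbers
pages = [
    ("/pricing", 12000, 540),
    ("/product/widget", 9000, 45),
    ("/blog/welcome", 400, 2),
    ("/signup", 3000, 450),
]

MIN_VISITORS = 1000  # assumed traffic floor; tune it to your own site


def candidates(pages, site_rate):
    """Pages with enough traffic and a below-average conversion rate,
    ordered by the conversions they would gain at the site-average rate."""
    picks = []
    for path, visitors, conversions in pages:
        page_rate = conversions / visitors
        if visitors >= MIN_VISITORS and page_rate < site_rate:
            missed = visitors * site_rate - conversions
            picks.append((path, page_rate, missed))
    return sorted(picks, key=lambda pick: pick[2], reverse=True)


total_visitors = sum(visitors for _, visitors, _ in pages)
total_conversions = sum(conversions for _, _, conversions in pages)
site_rate = total_conversions / total_visitors

for path, page_rate, missed in candidates(pages, site_rate):
    print(f"{path}: {page_rate:.1%} conversion, roughly {missed:.0f} conversions left on the table")
```

Ranking by missed conversions rather than by rate alone keeps low-traffic pages from crowding out the pages where a win would matter most.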

Step 2: Create a Variation

Once you've picked your page, it's time to build your "B" version—the variation. Based on the hypothesis you came up with, make one single, focused change. I know it's tempting to tweak a bunch of things at once, but resist! If you change the headline, the button color, and the main image, you'll have no idea which change actually made the difference.

Simple changes can have a huge impact. Think about trying things like:

Rewriting a headline: Pit your current headline against one that's more benefit-driven.

Changing a CTA button: Experiment with different text ("Buy Now" vs. "Get Started"), colors, or even placement.

Swapping an image: See if a different hero image does a better job of grabbing your visitors' attention.

This whole process is about structure. You start with a smart idea (your hypothesis), define how you'll measure success (your KPI), and then run the test until the numbers are solid enough to trust (statistical significance).

This framework is what turns a random idea into a measurable, scientific experiment.

Step 3: Set Up the Test and Split Traffic

Now you'll use an A/B testing tool to get the experiment running. Most modern platforms, including the analytics tools built into Alpha, make this part easy. You just define your control (Version A) and your variation (Version B), and then tell the tool to split your incoming traffic right down the middle.

Your tool will randomly show 50% of visitors the original page and the other 50% the new version. This randomization is absolutely critical for a fair test, as it smooths out any weird variables that could skew your data.
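Your testing tool handles the split for you, but it helps to see the idea. The sketch below shows one common approach: hashing a visitor ID so the assignment is effectively random across visitors yet stable for each person. The function name, the experiment label, and the visitor IDs are hypothetical, and this is not a description of how Alpha or any particular tool does it.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "homepage-headline") -> str:
    """Deterministically bucket a visitor into 'A' or 'B' with a 50/50 split."""
    # Including the experiment name keeps buckets independent across tests.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # 0..99
    return "A" if bucket < 50 else "B"


# Simulate 10,000 visitors to check that the split is close to even.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)

# The same visitor always lands in the same version.
print(assign_variant("visitor-42") == assign_variant("visitor-42"))
```

Keeping the assignment stable matters because a returning visitor who flips between versions would muddy the results for both.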

Step 4: Analyze the Results

Let the test run until it has gathered enough data, then it’s time for the fun part: seeing what happened. Your testing tool will have a performance dashboard waiting for you.

Here, you’ll see which version drove more conversions, clicks, or whatever metric you were tracking. Look for the version that has a statistically significant lead and declare it the winner. If you're interested in digging deeper, our guide on conversion rate optimization strategies is a great next step.
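Dashboards usually report a lift and some kind of range around it. As a rough illustration of where those numbers come from, this sketch computes the relative lift of B over A and an approximate 95% interval for the difference in conversion rates. The counts are invented and the normal approximation is an assumption; your tool may calculate things differently.

```python
import math


def lift_with_interval(conv_a: int, n_a: int, conv_b: int, n_b: int, z_crit: float = 1.96):
    """Return B's relative lift over A and a ~95% interval for the absolute difference."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    diff = p_b - p_a
    # Unpooled standard error, the usual choice for a confidence interval.
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    interval = (diff - z_crit * se, diff + z_crit * se)
    relative_lift = diff / p_a  # assumes the control has at least one conversion
    return relative_lift, interval


# Illustrative counts: 5,000 visitors per version.
lift, (low, high) = lift_with_interval(conv_a=200, n_a=5000, conv_b=260, n_b=5000)

# Only call a winner when the whole interval sits on one side of zero.
winner = "B" if low > 0 else "A" if high < 0 else "no clear winner"
print(f"relative lift: {lift:.0%}, difference between {low:.2%} and {high:.2%}, winner: {winner}")
```

If the interval straddles zero, the honest reading is that the test has not separated the two versions yet.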

Important: Whatever you do, don't stop the test too early! It's so tempting to call it after a day or two when one version is pulling ahead, but early results can be incredibly misleading. Let the test run for at least a full business cycle, usually one to two weeks, to account for natural dips and peaks in user behavior.

Once you have a clear winner, it's time to implement the successful change for all your visitors. You’ve just made a real, data-backed improvement to your website. Now, rinse and repeat. Come up with a new hypothesis and get the next test rolling.

Real-World A/B Testing Wins You Can Learn From

Theory is one thing, but seeing A/B testing in action is where it really clicks. The most successful companies on the planet don’t operate on hunches or gut feelings—they test, measure, and iterate their way to the top. These examples show just how powerful small, intentional changes can be.

Many of the most famous wins come from the tech giants who have built experimentation into their DNA. For instance, Dell famously achieved a massive 300% conversion lift on a critical page. Enterprise software leader SAP wasn't far behind, boosting engagement with their call-to-action by 32.5% simply by simplifying their landing page design.

These aren't just one-off lucky breaks. Microsoft's Bing reportedly runs over 1,000 tests every month, which is credited with driving a 10-25% increase in revenue per search. Meanwhile, companies like Google and Booking.com run over 10,000 experiments annually to constantly polish their user experience. If you want to dive deeper into these big-league wins, a market analysis from Future Market Insights has some great data.

Translating Big Wins to Small Business

The good news? You don't need a huge budget or a team of data scientists to get in on the action. The logic behind A/B testing scales down perfectly for entrepreneurs and small businesses, where every single conversion makes a difference.

Think about how this plays out in the real world for a smaller company:

An online store tests two product photo styles: one on a clean, white background versus another showing the product in a real-life context. The goal is simple: which one gets more "Add to Cart" clicks?

A consultant tries out two versions of their services page. Version A uses bullet points to list features, while Version B frames them as benefits in short, compelling paragraphs. The success metric is the number of visitors who book a consultation.

A local restaurant tests two headlines on its online ordering page. "Order Your Favorites Now" goes up against "Hot and Fresh Pizza, Delivered in 30 Minutes." They measure which one drives more completed orders.

In every one of these scenarios, the business isn't just making a random change. It's asking a specific question and letting its customers provide the answer through their actions. This is how you stop guessing and start building a website that genuinely works.

The goal isn't just to copy what big companies do, but to adopt their mindset of continuous improvement. A/B testing is the tool that lets you systematically discover what your specific audience responds to, turning your website into a powerful, data-driven growth engine.

These small, focused experiments really do add up. Finding a winning headline might only bump leads by 5%, but when you apply that learning across your entire site, the cumulative impact can be huge. For more practical advice, check out our detailed guide on A/B testing for landing pages and start running your own high-impact experiments.

Common A/B Testing Mistakes and How to Avoid Them

Running an A/B test is pretty straightforward, but running one that gives you trustworthy results? That takes discipline. It's easy to get excited and chase quick wins, but many marketers fall into the same traps that can completely tank their results. Knowing what these pitfalls are is the first step toward building a testing program that actually drives growth.

Testing Too Many Variables at Once

This is probably the most common mistake in the book. It's so tempting to design a new version with a slick headline, a bold new hero image, and a completely different call-to-action button all at once. But here's the problem: if that version wins, you have absolutely no idea why.

Was it the killer headline? The eye-catching image? The button color? You'll never know for sure. The only way around this is to be patient and test one isolated element at a time. It’s a methodical approach, but it’s the only way to draw a clear line between a specific change and how your users responded.

Ending a Test Too Soon

Another huge mistake is calling a test early. You might see one version rocket ahead after just a day or two and be tempted to declare a winner. This is a classic rookie error, and it almost always leads to making decisions based on random noise, not a real trend.

Think about it: user behavior changes. Traffic on a Tuesday morning is a whole different ballgame than traffic on a Saturday night.

To get data you can actually rely on, you have to let your test run for a full business cycle. That usually means at least one to two weeks. This gives you time to capture the full spectrum of user behavior and gives your results a fair chance to reach statistical significance, a fancy way of saying you can be confident the outcome wasn't just a fluke.
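To see why calling a test early is risky, here is a small simulation sketch. It runs A/A tests, where both versions are identical, and compares two habits: checking every day and stopping at the first "significant" result, versus waiting until the end. Every parameter (traffic, conversion rate, number of runs, the seed) is an assumption chosen for illustration.

```python
import math
import random


def p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        return 1.0  # no variation, so no evidence of a difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))


def simulate(runs=300, days=14, visitors_per_day=200, rate=0.05, seed=7):
    """A/A tests: both arms share one true rate, so every 'winner' is a false alarm."""
    rng = random.Random(seed)
    peeking_wins = 0  # looked significant on at least one daily check
    patient_wins = 0  # looked significant on the final day only
    for _ in range(runs):
        conv_a = conv_b = n = 0
        ever_significant = False
        for _ in range(days):
            n += visitors_per_day  # visitors per arm so far
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_day))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_day))
            if p_value(conv_a, n, conv_b, n) < 0.05:
                ever_significant = True
        peeking_wins += ever_significant
        patient_wins += p_value(conv_a, n, conv_b, n) < 0.05
    return peeking_wins / runs, patient_wins / runs


peek_rate, final_rate = simulate()
print(f"false winners when stopping at the first significant day: {peek_rate:.0%}")
print(f"false winners when waiting the full two weeks: {final_rate:.0%}")
```

Because the daily checks include the final day, the peeking rate can never come out lower than the patient one. Each extra look is another chance for random noise to cross the line.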

Ignoring Outside Factors

Your A/B test isn't happening in a bubble. The world keeps spinning, and external events can have a massive impact on your results. Did you launch a huge social media campaign halfway through the test? Did a holiday weekend send an unusual flood of traffic your way?

These kinds of events can easily skew your data and point you in the wrong direction. Before you even look at the numbers, take a second to think about what else was going on. If a major event interfered, you might need to let the test run longer or even scrap the results and start fresh.

Fearing a "Failed" Test

Let's get one thing straight: there's no such thing as a failed test. Many people see a test where the new version performs worse than the original as a complete waste of time. Nothing could be further from the truth.

An experiment that doesn't produce a lift isn't a failure; it's a learning opportunity.

Every single test, win or lose, teaches you something valuable about your audience. A "losing" variation tells you exactly what doesn't work for your customers, which is just as important as knowing what does. That insight helps you form smarter hypotheses for your next test, getting you one step closer to a real breakthrough. By sidestepping these common mistakes, you can ensure your A/B testing program is built on solid ground, giving you insights you can act on with real confidence.

A Few Common Questions About A/B Testing

As you start exploring experimentation, you're bound to run into a few common questions. Let's clear up some of the practical details so you can start testing with confidence.

How Long Should an A/B Test Run?

This is a classic "it depends" question, but I can give you some solid guideposts. The right duration really hinges on your website's traffic and your typical conversion rate. The biggest mistake people make is calling a test too early. You need enough data to hit statistical significance, which is just a fancy way of saying you can trust the results aren't a fluke.

For most sites, a good rule of thumb is to run a test for at least one to two full weeks. This helps smooth out the weird quirks in user behavior, like the difference between how people browse on a Tuesday morning versus a Saturday night. A full business cycle gives you a much more reliable picture.
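For a rough feel of how traffic and conversion rate drive duration, here is a sketch using a standard sample-size approximation for comparing two conversion rates. The 5% baseline, the 20% relative lift, the 1,000 daily visitors, and the 80% power setting are all assumptions for illustration. Treat the output as a ballpark, not a promise.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in each version to detect the given relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # 95% confidence, two-sided
    z_beta = NormalDist().inv_cdf(power)           # chance of catching a real lift
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_to_run(per_variant: int, daily_visitors: int, min_days: int = 7) -> int:
    """Days needed with a 50/50 split, rounded up to whole weeks."""
    days = max(math.ceil(2 * per_variant / daily_visitors), min_days)
    return math.ceil(days / 7) * 7


needed = visitors_per_variant(baseline=0.05, relative_lift=0.20)
duration = days_to_run(needed, daily_visitors=1000)
print(f"about {needed} visitors per version, so plan for roughly {duration} days")
```

Rounding up to whole weeks keeps every day of the week equally represented, which is the point of running for a full business cycle.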

What's the Difference Between A/B Testing and Multivariate Testing?

Good question. They're related, but they solve different problems.

Think of it like this:

A/B testing is a simple head-to-head competition. You pit Version A against Version B to see which one wins. You're typically changing just one thing—a headline, a button, an image—to see what impact that single change has.

Multivariate testing is more like a round-robin tournament. It tests a whole bunch of changes all at once to find the winning combination. For example, you might test two different headlines and three different button colors simultaneously. This creates six different variations, and the test tells you which specific combination works best.
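The two-headlines-by-three-colors example can be sketched in a few lines of Python. The headline text and color names are placeholders.

```python
from itertools import product

headlines = ["Order Your Favorites Now", "Hot and Fresh Pizza, Delivered in 30 Minutes"]
button_colors = ["orange", "green", "blue"]

# Every headline paired with every button color.
variations = [
    {"headline": headline, "button_color": color}
    for headline, color in product(headlines, button_colors)
]

print(len(variations))
for number, variation in enumerate(variations, start=1):
    print(number, variation)
```

The count multiplies with every element you add, and each combination needs its own share of traffic. That is why multivariate tests generally call for far more visitors than a simple A/B test.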

Can I A/B Test if I Don't Have a Ton of Traffic?

Absolutely, you just need to be smart about it. When you have low traffic, reaching that statistical significance mark is going to take a lot longer.

The key is to forget about tiny tweaks like changing a button from red to slightly-less-red. Instead, focus on making bold, high-impact changes. Test a completely different page layout. Try a radically different value proposition. A big swing is more likely to produce a big result, which is much easier to detect with a smaller audience. You'll just need a little more patience.
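Here is a rough sketch of why a big swing is easier to detect. It estimates the chance that a test catches a real lift given a fixed, small amount of traffic. The 5% baseline, the 1,500 visitors per version, and the two lift sizes are assumptions for illustration, and the formula is a simple normal approximation.

```python
import math
from statistics import NormalDist


def detection_chance(baseline: float, relative_lift: float,
                     per_variant: int, alpha: float = 0.05) -> float:
    """Approximate chance that a two-sided test detects the lift with this much traffic."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    se = math.sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / per_variant)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    # Ignores the tiny chance of a significant result in the wrong direction.
    return NormalDist().cdf(abs(p2 - p1) / se - z_alpha)


small_tweak = detection_chance(baseline=0.05, relative_lift=0.05, per_variant=1500)
bold_change = detection_chance(baseline=0.05, relative_lift=0.50, per_variant=1500)
print(f"small tweak (5% lift): detected about {small_tweak:.0%} of the time")
print(f"bold change (50% lift): detected about {bold_change:.0%} of the time")
```

With the same audience, the bold change has a far better chance of producing a result you can trust. A tiny tweak would mostly get lost in the noise.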

Ready to stop guessing and start building a website that actually converts? Alpha uses AI to help you create stunning, responsive websites in hours, not weeks. Our built-in analytics make it simple to track performance and understand your audience. Get started with Alpha today!
